Almost every professional you ask will tell you that networking is one of the most valuable parts of their work. Almost no one can tell you, with any precision, what it has produced for them in the past year. The activity is real; the measurement is anecdotal. The result is a category of work that everyone agrees matters but no one feels confident defending when it gets cut.

The reason is partly cultural. Networking has always been a soft activity in industries that measure hard outputs. But it is also methodological: the right measurements are subtle, the wrong measurements are misleading, and most professionals have never been trained on either. Surveys of B2B sales organizations consistently find that only a minority track networking-sourced pipeline as a distinct category, despite networking being self-reported by the majority of senior reps as their highest-yield lead source.

This guide is a practical framework for measuring networking ROI—at the individual level, the team level, and the organizational level. It distinguishes between vanity metrics (numbers that feel productive but tell you little) and value metrics (numbers that actually inform decisions). And it gives you a workable system for capturing the data without building a new analytics function.

Why Networking ROI Is Hard to Measure

The standard ROI formula is straightforward: net outcome value (what the activity returned minus what it cost) divided by cost. The difficulty in networking is that both the outcome and the cost are time-shifted, partially attributable, and often invisible.
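As a minimal sketch of the arithmetic, assuming hypothetical cost and revenue figures, the snippet below shows both the plain value-to-cost ratio and the net-return form that finance teams usually mean by ROI. The function names are illustrative only.

```python
def value_to_cost_ratio(outcome_value: float, cost: float) -> float:
    """Outcome value divided by cost: dollars returned per dollar spent."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return outcome_value / cost


def net_roi(outcome_value: float, cost: float) -> float:
    """Net return over cost: (outcome value - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (outcome_value - cost) / cost


# Hypothetical figures: one conference costing 8,000 in tickets, travel,
# and rep time, later credited with 30,000 of closed revenue.
ratio = value_to_cost_ratio(30_000, 8_000)
roi = net_roi(30_000, 8_000)
print(f"value/cost = {ratio:.2f}, net ROI = {roi:.0%}")
```

The hard part in practice is not the division but deciding which outcomes and which costs belong in the two inputs.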

Time-Shifted Outcomes

A relationship formed at a conference in March may produce a deal in October. The connection between the two is real, but in any monthly or quarterly view, the activity and the outcome show up as separate events. Most attribution systems are not patient enough to connect them, and most reps do not log the linkage themselves.

Partial Attribution

The deal that closes in October was probably influenced by the conference connection in March, but also by the LinkedIn post the buyer saw in June, the case study they read in August, and the introduction from a mutual connection in September. Naive attribution gives 100% credit to the last touchpoint. Sophisticated attribution gives weighted credit across the journey, but the data to do this well is rarely captured at the networking-event level.
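The difference between the two approaches can be sketched in a few lines. This is an illustration under stated assumptions, not a recommended model: the deal size and the weights are hypothetical, and the touchpoint labels simply mirror the example journey above.

```python
def last_touch(journey, deal_value):
    """Naive attribution: the final touchpoint gets all the credit."""
    return {journey[-1]: deal_value}


def weighted_touch(journey, weights, deal_value):
    """Multi-touch attribution: split credit in proportion to the weights."""
    if len(journey) != len(weights):
        raise ValueError("need exactly one weight per touchpoint")
    total = sum(weights)
    return {tp: deal_value * w / total for tp, w in zip(journey, weights)}


journey = ["conference (Mar)", "LinkedIn post (Jun)",
           "case study (Aug)", "mutual intro (Sep)"]
deal_value = 50_000      # hypothetical deal size
weights = [4, 1, 1, 4]   # hypothetical: first and last touches weighted most

naive = last_touch(journey, deal_value)
shared = weighted_touch(journey, weights, deal_value)
print(naive)
print(shared)
```

Under last-touch, the March conference receives no credit at all; under the weighted split it receives 40% of the deal. Neither is possible unless the conference was recorded as a touchpoint in the first place.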

Invisible Outcomes

Some networking outcomes are not deals at all. They are warm introductions to other prospects, hires made, partnerships formed, advice received, or reputation built. These have real economic value but rarely appear in any analytics system. They are usually mentioned anecdotally and forgotten when budgets get reviewed.

The combined effect is that the organizations and individuals who network most effectively often have the worst documentation of the value they produce. The activity speaks for itself in the rep’s own pipeline; it does not speak for itself in a CFO’s spreadsheet.

The Three-Layer Measurement Framework

A workable measurement system has three layers, each answering a different question. Most teams skip directly to layer three (pipeline value) and find it useless because they have not built layers one and two. The layers are sequential: each one depends on data captured in the layer below it.

Layer 1: Activity (What Did I Actually Do?)

The most basic layer. Counts of networking activities undertaken. Examples:

- Number of contacts captured at events.

- Number of new connections made via warm introduction.

- Number of meaningful conversations beyond a 30-second exchange.

- Number of LinkedIn connection requests sent and accepted.

- Number of digital business card shares.

Activity metrics are the easiest to capture and the easiest to misuse. They are necessary because without them you cannot tell whether the system is being used; they are insufficient because they tell you nothing about quality.

Treat activity metrics as a hygiene check, not a success metric. If activity is zero, something is wrong. If activity is high but layers two and three are flat, you are doing something busy but not useful.

Layer 2: Engagement (Did Anything Happen After?)

The middle layer. The proportion of activity that produced any subsequent meaningful action. Examples:

- Save rate: of contacts who received your card, what fraction added you to their phone or CRM.

- Reply rate: of follow-ups you sent post-event, what fraction received a reply.

- Meeting conversion: of new contacts, what fraction agreed to a follow-up meeting within 30 days.

- Connection acceptance rate: of LinkedIn requests sent after meeting in person, what fraction were accepted.

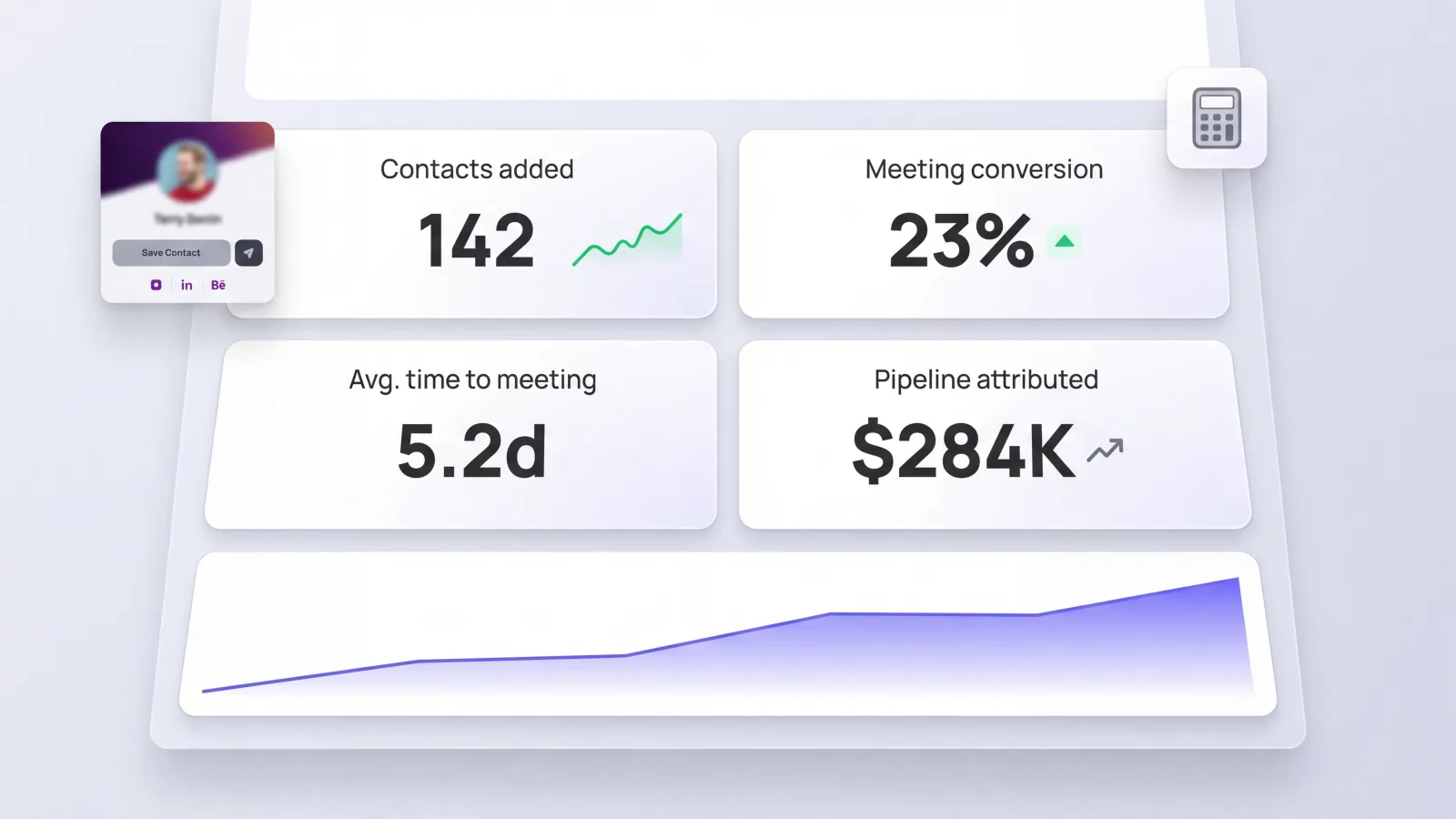

Engagement metrics tell you whether your activity is producing relationships rather than just exchanges. They are the layer where most of the actionable signal lives. A rep with 200 captured contacts and a 5% meeting conversion is producing 10 meetings; a rep with 100 captured contacts and a 25% meeting conversion is producing 25. The second rep is networking better, even though the first is networking more.
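The two-rep comparison can be reproduced with a small sketch. The `RepQuarter` class and its field names are hypothetical; the counts are the ones from the example above.

```python
from dataclasses import dataclass


@dataclass
class RepQuarter:
    name: str
    contacts_captured: int
    meetings_booked: int

    @property
    def meeting_conversion(self) -> float:
        """Fraction of captured contacts that turned into a meeting."""
        if self.contacts_captured == 0:
            return 0.0
        return self.meetings_booked / self.contacts_captured


reps = [RepQuarter("Rep A", 200, 10), RepQuarter("Rep B", 100, 25)]

# Rank by engagement (conversion), not by activity (contacts captured).
ranked = sorted(reps, key=lambda r: r.meeting_conversion, reverse=True)
for rep in ranked:
    print(f"{rep.name}: {rep.contacts_captured} contacts, "
          f"{rep.meetings_booked} meetings, "
          f"{rep.meeting_conversion:.0%} conversion")
```

Sorting on the conversion rate rather than the contact count is the whole point: it puts Rep B first despite half the activity.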

This is the layer where the difference between a good networker and a mediocre one becomes visible.

Layer 3: Outcome (What Did the Relationships Produce?)

The top layer. The downstream value created by the relationships that engagement produced. Examples:

- Pipeline value attributed to networking-sourced contacts.

- Closed-won deals attributed to networking-sourced contacts.

- Meetings sourced through warm introductions from existing contacts.

- Hires made through the personal network.

- Partnerships formed.

- Inbound opportunities (calls, emails, referrals) sourced from people in the network.

Outcome metrics are the most valuable but also the most time-shifted and most attribution-sensitive. They should be reviewed quarterly or annually rather than weekly. Trying to measure them in shorter cycles produces noise and discourages the patient work that produces the best outcomes.

The right pattern is: track activity weekly, engagement monthly, and outcomes quarterly. Each layer informs the one above it.

The Specific Metrics Worth Tracking

Across the three layers, here are the specific metrics that tend to produce the most insight relative to the effort of capturing them. Treat the benchmark ranges below as starting calibration points based on what consistently shows up across B2B teams—not absolute targets. Your industry, sales motion, and event mix will shift the right numbers up or down.

Contacts Captured per Networking Hour

Definition: Total new contacts added to your system divided by hours spent in networking-eligible activities (events, conferences, planned coffees, intentional outreach).

Why it matters: A simple efficiency metric. Most professionals dramatically overestimate how many contacts they make per hour of networking. The number is almost always lower than memory suggests, and the gap between perception and reality is itself useful feedback.

Benchmark: 4 to 8 captured contacts per hour at a busy conference is good. 1 to 3 per hour at a typical networking event is normal. Below 1 per hour is a signal that the events you are attending are not networking-rich, regardless of how enjoyable they are.
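A rough sketch of the calculation, assuming a hand-kept log of events. The event names and numbers are invented for illustration; the only threshold used is the "below 1 per hour" signal from the benchmark above.

```python
def contacts_per_hour(new_contacts: int, hours: float) -> float:
    """New contacts captured per hour of networking-eligible time."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return new_contacts / hours


# Hypothetical log: (event, new contacts captured, hours spent networking)
event_log = [
    ("Trade conference", 18, 3.0),
    ("Local meetup", 3, 2.0),
    ("Industry dinner", 1, 2.5),
]

rates = {event: contacts_per_hour(n, hrs) for event, n, hrs in event_log}

# Events yielding fewer than one contact per hour are worth a second look.
low_yield = [event for event, rate in rates.items() if rate < 1.0]

for event, rate in rates.items():
    print(f"{event}: {rate:.1f} contacts/hour")
print("Below 1 per hour:", low_yield)
```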

Save Rate

Definition: Of the people who received your contact information, the fraction who saved it (added you to their contacts, accepted a connection request, or otherwise indicated they wanted to maintain the relationship).

Why it matters: Distinguishes between a contact you handed your card to and a contact you actually formed. A high save rate suggests your introduction is landing well; a low save rate suggests the interaction was forgettable or the card itself is unappealing.

Benchmark: 60% to 80% save rate is typical for warm meetings; 30% to 50% for incidental conference exchanges; below 20% suggests something is wrong with the introduction.

Time to First Meaningful Reply

Definition: The median time between the initial contact (event introduction, cold outreach with a warm reference) and the first substantive reply from the contact.

Why it matters: A sensitive indicator of how warm the relationship actually is. Cold contacts produce replies measured in days or weeks, if at all. Warm contacts produce replies within hours. The metric tends to correlate strongly with downstream conversion.

Benchmark: Under 24 hours: very warm. 24 to 72 hours: warm. 72 hours to 7 days: lukewarm. Beyond 7 days: cold.
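The metric and its buckets can be sketched as follows. The timestamps are hypothetical, and leaving out contacts who never replied is an assumption of this sketch rather than part of the definition.

```python
from datetime import datetime
from statistics import median


def warmth(hours: float) -> str:
    """Map a reply time in hours onto the benchmark buckets above."""
    if hours < 24:
        return "very warm"
    if hours <= 72:
        return "warm"
    if hours <= 7 * 24:
        return "lukewarm"
    return "cold"


# Hypothetical (first contact, first substantive reply) timestamps.
# Contacts who never replied are excluded here (an assumption); you may
# prefer to count them as "cold" instead.
threads = [
    (datetime(2026, 3, 10, 9, 0), datetime(2026, 3, 10, 15, 0)),   # 6 h
    (datetime(2026, 3, 10, 9, 0), datetime(2026, 3, 12, 9, 0)),    # 48 h
    (datetime(2026, 3, 11, 10, 0), datetime(2026, 3, 16, 10, 0)),  # 120 h
]

reply_hours = [(reply - sent).total_seconds() / 3600 for sent, reply in threads]
median_hours = median(reply_hours)
print(f"median reply time: {median_hours:.0f} h ({warmth(median_hours)})")
```

The median is used rather than the mean so that a single very late reply does not drag the whole figure into the "cold" bucket.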

Meeting Conversion Rate

Definition: Of new contacts captured, the fraction who agreed to a follow-up meeting within 30 days.

Why it matters: The clearest signal that a contact is moving toward becoming a relationship rather than a stagnant entry in your CRM.

Benchmark: 15% to 30% from cold conference networking is good. 40% or more is a reasonable target for intentional, targeted networking with named accounts. Above 50% suggests either that the targeting is excellent or that the bar for “contact” is too high (you are only counting people who were already going to meet you).
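A sketch of how the 30-day window might be applied, assuming each contact record carries a capture date and, optionally, the date a follow-up meeting was agreed. The data and the `converted` helper are hypothetical.

```python
from datetime import date
from typing import Optional

WINDOW_DAYS = 30  # the 30-day window from the definition above


def converted(captured: date, meeting_agreed: Optional[date]) -> bool:
    """True if a follow-up meeting was agreed within the window."""
    if meeting_agreed is None:
        return False
    return 0 <= (meeting_agreed - captured).days <= WINDOW_DAYS


# Hypothetical contacts: (capture date, date a follow-up meeting was agreed)
contacts = [
    (date(2026, 3, 10), date(2026, 3, 24)),  # 14 days: counts
    (date(2026, 3, 10), date(2026, 5, 2)),   # 53 days: outside the window
    (date(2026, 3, 11), None),               # no meeting agreed
    (date(2026, 3, 11), date(2026, 4, 9)),   # 29 days: counts
    (date(2026, 3, 12), None),               # no meeting agreed
]

rate = sum(converted(c, m) for c, m in contacts) / len(contacts)
print(f"meeting conversion: {rate:.0%}")
```

Note that the denominator is every captured contact, not only those who replied; counting only responsive contacts is one way the rate drifts above 50% without the networking getting any better.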

Pipeline Attributed to Networking

Definition: Total open pipeline value where networking activity is the recorded source of the original contact.

Why it matters: The single most credible metric for defending networking budgets to finance. Tied directly to opportunity value.

Benchmark: For sales teams, networking should produce 20% to 40% of pipeline in B2B segments where personal relationships matter. Below 10% is a signal that either the team is not networking enough, or the attribution is broken.

Closed-Won Attributed to Networking

Definition: Closed deals where networking is the recorded source of original contact, valued at the closed amount.

Why it matters: The truest ROI metric. Lagging by 6 to 18 months from the original contact, depending on sales cycle length, but the most defensible measure of dollars produced.

Benchmark: The closed-won attribution rate should approximately match the pipeline attribution rate. If pipeline attribution is 30% and closed-won is 5%, networking-sourced deals are converting worse than other sources—which is itself useful information.
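The comparison between the two attribution rates can be sketched like this. The records stand in for a CRM export and are invented to reproduce the 30% versus 5% example; the "under half" flag is a hypothetical rule of thumb, not a benchmark from this guide.

```python
def source_share(records, source="networking"):
    """Fraction of total value whose recorded original source matches."""
    total = sum(amount for _, amount in records)
    if total == 0:
        return 0.0
    return sum(amount for src, amount in records if src == source) / total


# Hypothetical (original source, amount) records exported from a CRM.
open_pipeline = [("networking", 300_000), ("inbound", 400_000),
                 ("outbound", 300_000)]
closed_won = [("networking", 25_000), ("inbound", 275_000),
              ("outbound", 200_000)]

pipeline_share = source_share(open_pipeline)
closed_share = source_share(closed_won)

# Hypothetical rule of thumb: flag when the closed-won share is under
# half the pipeline share.
needs_review = closed_share < 0.5 * pipeline_share

print(f"pipeline: {pipeline_share:.0%}, closed-won: {closed_share:.0%}, "
      f"review needed: {needs_review}")
```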

Building the Measurement System

The metrics are useless if the data is not captured. Here is the practical capture system that tends to work.

Capture at the Source

The data needs to enter the system at the moment of contact, not days later. This is the single biggest reason most measurement systems fail: by the time the rep tries to log the contact, half the context has been forgotten and the entry is incomplete or never happens.

The fix is structural. The capture mechanism (a digital business card, an event app, a CRM mobile interface) has to be in the rep’s pocket and easier to use than the alternative. Lynqu’s lead-capture flow records contact details into a structured record at the moment of share, with the event and source automatically tagged. The rep does not have to remember to log anything; the structure is created by default. (For sales-team-specific use cases, see how sales teams close more deals with digital business cards.)

Tag the Source Automatically

Every captured contact should record where it came from. Typical tags: “Conference XYZ 2026,” “LinkedIn DM,” “Warm intro from Alice,” “Email signature click.” This tagging is what makes attribution possible later. UTM parameters on QR codes and tracking links produce the source data automatically; manual tagging on rep entries fills in the gaps.
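For links and QR codes, the tagging can be done with the Python standard library. This is a minimal sketch: `example.com`, the path, and the tag values are placeholders, and `add_utm` and `read_source` are hypothetical helper names.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return url with utm_source, utm_medium, and utm_campaign set."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))  # keep any existing parameters
    query.update(utm_source=source, utm_medium=medium, utm_campaign=campaign)
    return urlunsplit(parts._replace(query=urlencode(query)))


def read_source(url: str) -> str:
    """Recover the source tag from a tagged link ('' if untagged)."""
    return dict(parse_qsl(urlsplit(url).query)).get("utm_source", "")


# Placeholder URL and tag values; encode the result into the QR code.
tagged = add_utm("https://example.com/card/jane",
                 source="conference-xyz-2026", medium="qr",
                 campaign="spring-events")
print(tagged)
print(read_source(tagged))
```

Generating one tagged link per event means the source arrives with the contact, without the rep having to type anything.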

Sync to the CRM

The captured contact has to flow into the system where the rest of the pipeline lives. A contact that exists only in the rep’s digital card and never reaches Salesforce or HubSpot will not appear in any pipeline analysis, regardless of how excellent the original capture was.

Add Light Annotation

One field worth adding to every captured contact: a one-sentence note about the conversation. “Discussed Q3 expansion in EMEA, send case study X.” The note is the difference between a contact you remember and a contact you have to reconstruct. It also serves as the basis for follow-up content; reps with strong note-taking habits convert at noticeably higher rates than reps without.
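Taken together, source tagging and the one-sentence note suggest a very small record shape. The sketch below is an assumption about what such a record could look like; the field names are hypothetical and do not reflect the schema of any particular card app or CRM.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CapturedContact:
    name: str
    email: str
    source: str    # e.g. "Conference XYZ 2026"
    note: str = "" # one-sentence conversation note
    captured_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc))

    def missing_fields(self):
        """Names of attribution-critical fields that were left blank."""
        return [f for f in ("source", "note") if not getattr(self, f).strip()]


contact = CapturedContact(
    name="Alex Doe",
    email="alex@example.com",
    source="Conference XYZ 2026",
    note="Discussed Q3 expansion in EMEA, send case study X.",
)
untagged = CapturedContact(name="Sam Roe", email="sam@example.com", source="")

print(contact.missing_fields())
print(untagged.missing_fields())
```

A check like `missing_fields` is one way to catch incomplete entries before they sync, while the conversation is still fresh enough to fix them.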

Review Quarterly, Not Weekly

The full system, outcomes included, should be reviewed at the team level once a quarter, not more often. The lower layers run on shorter cycles: activity is reviewed weekly because the cycle is short, and engagement is reviewed monthly because conversations move on month-long timeframes. Outcomes are reviewed quarterly because that is the cycle on which deals close. Trying to review all three layers weekly produces noise and discourages the patient activities that drive long-term results.

What Vanity Metrics Look Like

Some of the most commonly reported networking metrics are vanity metrics. Recognizing them is half the battle.

Total LinkedIn Connections

The count of connections in a network tells you almost nothing about that network’s value. A network of 5,000 mostly irrelevant connections is worse than a network of 500 closely aligned ones. The metric optimizes the wrong thing: volume rather than relevance.

Number of Events Attended

Attendance is presence; presence is not productivity. The metric should always be paired with engagement and outcome data. Otherwise “attended 12 conferences” sounds productive while being meaningless.

Number of Cards Handed Out

This was always a vanity metric but is even more so in the digital age, where the marginal cost of sharing is zero. A rep who shared their card 500 times last quarter has done very little if 480 of those shares produced no save and no follow-up. The metric to watch is the save rate, not the share count.

Cost per Contact

Almost always a misleading metric in isolation. Some of the most expensive contacts (named-account meetings at exclusive events) produce 100x the value of cheaper contacts (random conference exchanges). Cost should be evaluated against the eventual outcome, not against the count of contacts produced.
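A small sketch makes the point concrete. Both events and all figures are hypothetical; the comparison is between cost per contact and attributed value per dollar spent.

```python
# Hypothetical events:
# (name, total cost, contacts captured, value later attributed)
events = [
    ("Exclusive named-account dinner", 5_000, 5, 200_000),
    ("Large industry conference", 3_000, 60, 30_000),
]

summary = {}
for name, cost, contacts, value in events:
    summary[name] = {
        "cost_per_contact": cost / contacts,
        "value_per_dollar": value / cost,
    }

for name, metrics in summary.items():
    print(f"{name}: {metrics['cost_per_contact']:.0f} per contact, "
          f"{metrics['value_per_dollar']:.0f}x value per dollar")

# Ranking by cost per contact and by value per dollar gives opposite answers.
cheapest = min(summary, key=lambda n: summary[n]["cost_per_contact"])
best_value = max(summary, key=lambda n: summary[n]["value_per_dollar"])
```

On cost per contact the conference looks twenty times better; on value per dollar the dinner wins by a factor of four.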

Common Mistakes

Measuring Only What Is Easy to Measure

Activity metrics are easy. Outcome metrics are hard. The temptation is to focus on what the system can produce automatically, which leads to a system that optimizes for measurable activity instead of valuable activity. Resist this. The metric you most want is usually one of the harder ones.

Single-Touch Attribution

Treating the source of the original contact as 100% responsible for the eventual deal ignores everything that happened in between. Use multi-touch attribution where possible, or at minimum acknowledge that the network gets credit for an opportunity that may have been closed by other touchpoints.

Confusing Lag With Failure

A relationship formed at a conference in March may not produce visible value until October. A monthly review that does not see immediate output may conclude the conference was a waste, when in fact the value is still developing. Always look at networking outcomes on at least a 6-month rolling window.

Forgetting the Non-Pipeline Outcomes

Even the most rigorous pipeline-based attribution misses warm introductions, hires, partnerships, advice, and reputation effects. A complete networking ROI view includes these as separate outcomes, even if they are not dollar-quantified. Otherwise the people who network most effectively are systematically undervalued.

Letting Perfect Be the Enemy of Good

The ideal measurement system has multi-touch attribution, six months of trend data, manually annotated context on every contact, and a disciplined review cadence for each layer. Most organizations cannot build this immediately. A simpler version (activity, save rate, meeting conversion, and pipeline attribution), captured consistently, produces 80% of the value with 20% of the effort. Start there.

The Individual vs Team View

The metrics above apply to both individual contributors and teams, but the diagnostic questions differ.

For individuals: Are my activity, engagement, and outcome metrics consistent over time, or trending in a particular direction? Where is my conversion lowest, and what could I change about my approach? Which of my contacts have produced multiple downstream introductions, and what made those relationships different?

For teams: Which reps consistently outperform on engagement metrics regardless of activity volume? What are they doing that others can learn from? Which events produce the highest yield per dollar invested, and which are vanity attendance? How does networking attribution compare to other lead sources at similar maturity?

The team view enables resource decisions: where to invest event budget, who to send, what to coach. The individual view enables professional development: where each rep is strongest and weakest, what specific behaviors correlate with their best outcomes.

The 90-Day Implementation Plan

If you are starting from no measurement at all, here is a sequence that produces a working system within a quarter.

Days 1 to 14: Audit current state. What is captured today, where, by whom. Identify the gap between capture and CRM. Set up source tagging and the CRM integration if they are not already in place.

Days 15 to 30: Define the four to six metrics you will track. Resist the urge to track more. Establish baselines for each metric using whatever historical data exists.

Days 31 to 60: Run the system. Capture contacts at every event with full source tagging. Sync to CRM. Add the one-sentence note discipline. Have reps review their own activity weekly.

Days 61 to 90: First quarterly review. Compare actual numbers against baselines. Identify the metric that needs the most improvement and design one focused experiment to address it.

By day 90, you have a working measurement system, real data, one targeted improvement experiment in flight, and a clear story to tell finance about what networking is producing. For event-heavy teams specifically, the conference networking guide covers the capture-and-attribution rituals that produce the cleanest data.

What This Earns You

Networking ROI is not unmeasurable; it is undermeasured. The combination of activity, engagement, and outcome metrics, captured at the source and reviewed on the right cycle, produces a defensible picture of what networking actually contributes.

The professionals and teams who measure consistently tend to also network consistently, and the two reinforce each other. Once you can see the numbers, investing in the activities that produce them follows naturally. Once the activity is consistent, the numbers improve.

Start with the simplest possible system. Capture the right data at the moment of contact. Review it on the right cycle. The compounding return is not just in the deals that close—it is in the institutional clarity about which networking activities are worth doing more of, and which ones are just busy. That clarity is itself one of the most valuable outputs any measurement system can produce.